Europe Can Regulate AI. But Can It Shape Pro-Worker AI?

The EU has moved faster than most to regulate artificial intelligence. But the harder question is whether Europe has enough leverage over the AI stack to steer innovation towards worker-augmenting systems rather than importing worker-substituting ones designed elsewhere.

By Maria Mexi, Senior Policy Advisor, TASC Platform

Europe may be writing the rules of artificial intelligence, but it is not yet shaping the technology on its own terms. The EU’s AI Act, which entered into force on 1 August 2024, confirmed the continent’s ambition to act as a global rule-maker. Yet rule-making is not the same as power. And as the debate shifts from AI adoption to the political economy of AI - who designs it, who controls its inputs, who captures its gains - that distinction becomes impossible to ignore.

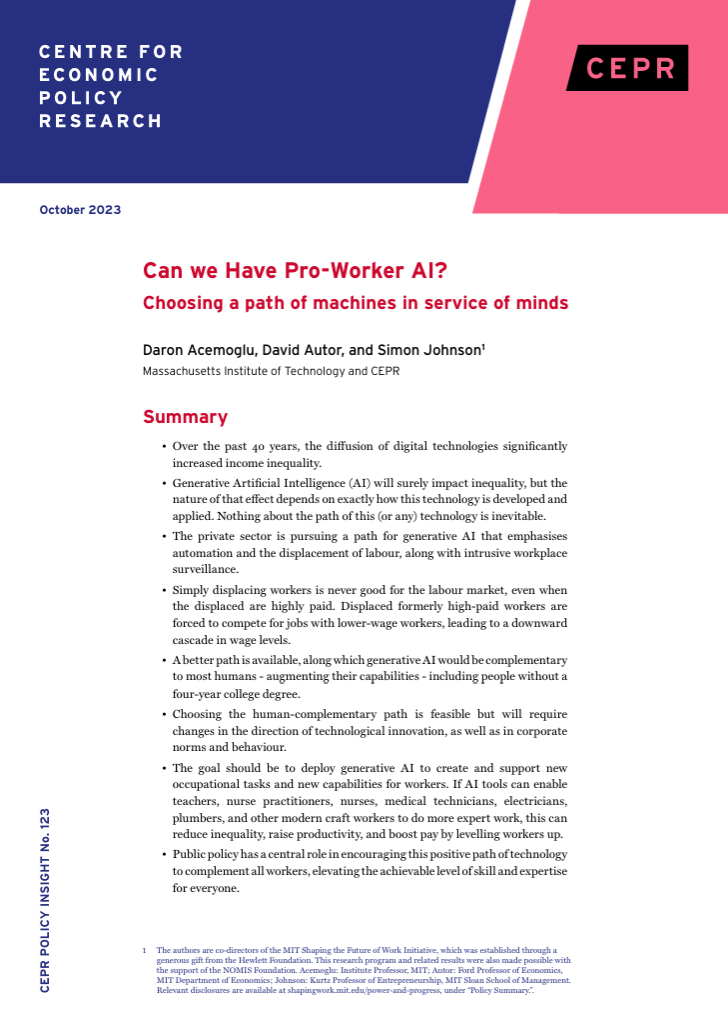

The most consequential question in AI today is not how fast the technology diffuses but in whose interest it is designed. The emerging case for “pro-worker AI,” advanced by Daron Acemoglu, David Autor and Simon Johnson, draws a sharp line: pro-worker technologies expand human capabilities and make expertise more valuable; worker-substituting technologies replace tasks wholesale and concentrate gains at the top. That distinction is not abstract. It determines whether a hospital deploys AI that sharpens a clinician’s diagnostic judgment or one that replaces the consultation altogether; whether a factory floor tool augments an engineer’s skill or reduces the role to monitored task-completion.

Crucially, the pro-worker path is not the default. It has to be designed, incentivised and institutionally supported. This is precisely where Europe’s dilemma begins, because shaping pro-worker AI is not merely a question of regulating harmful uses once systems reach the workplace. It requires leverage over the upstream decisions that determine what kinds of systems are built in the first place. And there, Europe remains far weaker than its rhetoric of digital sovereignty suggests.

The layered problem of AI power

AI is not a single market but a layered system of power. Control is exercised across at least five strategic layers: semiconductors, compute and cloud infrastructure, foundation models, data, and applications. Europe retains strengths in regulation and downstream deployment. But across the upstream layers where strategic leverage is concentrated, it is structurally dependent on others.

Start with semiconductors. The EU’s Chips Act acknowledges that Europe holds roughly 10 per cent of the global semiconductor market, with nearly 80 per cent of relevant suppliers headquartered outside the Union. Move to cloud and compute: a recent European Parliament analysis reports that AWS, Microsoft Azure and Google Cloud account for about 70 per cent of EU cloud infrastructure, while European providers’ combined share had fallen to roughly 13 per cent by 2022. These are not merely market statistics. They determine who controls scalable compute, pricing power, switching costs and the environments in which models are trained and deployed.

Foundation models add a further layer of concentration. Stanford’s 2025 AI Index reports that nearly 90 per cent of notable AI models in 2024 originated in industry, up from 60 per cent the year before. Europe is not absent from advanced research, but frontier model development is increasingly concentrated in a handful of corporate actors with privileged access to compute, data and global deployment channels.

The data layer deserves particular attention, because it is where Europe’s vulnerabilities intersect most directly with the pro-worker question. Training data - its composition, its labelling, its embedded assumptions about tasks, roles and performance - shapes what AI systems learn to optimise. Yet the largely invisible labour that makes AI usable, from data annotation to reinforcement learning from human feedback, is predominantly performed under precarious conditions far beyond Europe’s regulatory reach - a pattern documented across AI supply chains from sub-Saharan Africa to Southeast Asia, and theorised as a constitutive “human infrastructure” without which the appearance of machine intelligence could not be sustained. Meanwhile, the rich sectoral and administrative datasets that European firms, public institutions and social partners hold - in healthcare, manufacturing, public services and education - remain largely fragmented and underutilised. A Europe that cannot pool and govern its own data assets will find its position in the foundation model layer weakening further, regardless of what its regulations say.

Why this is a labour problem, not only an industrial one

Why does all this matter for workers? Because what happens upstream increasingly determines what happens downstream in the workplace. Control over chips, cloud, models and data shapes which tasks are automated, how performance is measured, how managerial authority is exercised, how surveillance is normalised and how the productivity gains from AI are distributed. A Europe that lacks leverage over the upstream AI stack will have correspondingly less control over the terms on which work itself is reorganised.

This is what makes the pro-worker AI debate a structural question, not merely a normative one. Acemoglu and Johnson’s work on “task displacement” versus “task reinstatement” shows that the direction of automation follows from investment incentives, tax structures, and the relative bargaining power of labour and capital, not from any inherent logic of the technology. Pro-worker AI requires deliberate design choices: domain-specific systems built around professional expertise, interaction models that reduce blind reliance rather than deepen dependency, and tools that support skill development over time.

Some will argue that regulation alone provides sufficient leverage; that the “Brussels effect” can impose pro-worker standards on the global AI industry just as GDPR reshaped data practices worldwide. That confidence is misplaced. Data protection rules could be layered onto existing digital services without altering the underlying production system. Pro-worker AI, by contrast, requires intervening in the design and incentive structure of the technology itself. Regulation can set boundaries on how AI is deployed; it cannot, by itself, determine what AI is built to do. For that, Europe needs leverage it does not yet have.

Building leverage: what it actually requires

If Europe cannot rely on regulation alone, what would genuine leverage require? It would require progress across three interlocking domains.

First, strategic infrastructure. Europe’s existing instruments - the AI Act, the Chips Act, the Data Act, the European High Performance Computing Joint Undertaking - need to be more deliberately aligned around a shared strategic objective. That objective is not technological self-sufficiency, which is neither realistic nor desirable in deeply interdependent systems. It is the cultivation of sufficient capacity in compute, data governance and model development to create genuine alternatives to structural dependency. Public procurement is one powerful, underused lever: European public authorities spend over €2 trillion annually on procurement, and conditioning a meaningful share of that spending on requirements around explainability, worker impact assessments and skill-complementarity would reshape vendor incentives in ways that no ethics principle alone can achieve.

Second, institutional governance with sectoral depth. The AI Act’s requirements for high-risk system assessment create an important opening, but only if they are developed with specificity. Annex III explicitly identifies employment and workforce management as a high-risk domain, covering recruitment, performance monitoring, task allocation and termination decisions. That designation matters less if conformity assessments remain generic checklists. What is needed is sectoral precision: assessments developed with industry bodies, workforce institutions and social partners that distinguish between AI systems that expand professional judgment and those that merely reduce it to monitored task completion. Several member states are already moving in this direction: the Netherlands’ 2024 supervisory framework assigns sector-specific AI oversight in healthcare, while France and Germany are pursuing sectoral AI governance initiatives in public services. These national experiments should inform the harmonised European approach, not follow it.

Third, collective voice. Here Europe holds an asset the United States does not: a dense institutional architecture of social dialogue - works councils, sectoral collective bargaining, tripartite consultation - that can engage with AI governance as a matter of workplace rights, not merely regulatory compliance. Collective agreements in Germany’s metalworking sector and across Scandinavian public services have already begun to address algorithmic management, AI monitoring and skills adjustment as subjects of negotiation. The European Works Councils Directive, recently revised, creates an opportunity to extend information and consultation rights to AI-related decisions that restructure work across borders. If pro-worker AI means anything institutionally, it means that workers and their representatives have a structured role in determining how AI enters the workplace, not simply a right to be informed after the fact.

The multilateral dimension

Europe’s challenge is also a global one. The Global Digital Compact, adopted in September 2024 as part of the Pact for the Future, frames digital governance in terms of inclusion, capacity-building and narrowing digital divides. That framing matters because it implicitly recognises that AI governance is about unequal capability and unequal power, not only about ethics and safety. For Europe, the Compact creates space for coalition-building with the Global South around shared interests: constraining the concentration of AI infrastructure, building indigenous model capacity, and ensuring that governance norms reflect a broader range of labour market realities than those prevailing in Silicon Valley or Shenzhen.

But multilateral principles alone will not undo the concentration of cloud, compute and frontier model development in a small number of firms and countries. The real question - for Europe and for the wider international community - is how to remain open to cooperation without locking in structural dependency, and how to support innovation without surrendering control over the infrastructures that shape work, production and public life.

The real challenge

Europe’s real challenge is not simply to regulate AI’s risks. It is to build enough strategic and institutional leverage across infrastructure, governance and collective bargaining to ensure that AI raises the value of human work rather than diminishes it.

The institutional architecture for pro-worker AI exists. What is still lacking is the political will to treat strategic leverage as a precondition for effective governance, not an afterthought to it. Until that changes, pro-worker AI will remain less a governing strategy than a regulatory aspiration: carefully articulated, widely endorsed, and substantially unenforceable.

